TraceRTL: Agile Performance Evaluation for Microarchitecture Exploration

DOI:

https://doi.org/10.66834/5s84yp08

Keywords:

Trace-driven simulation, Performance evaluation, Cross ISA benchmarking

Abstract

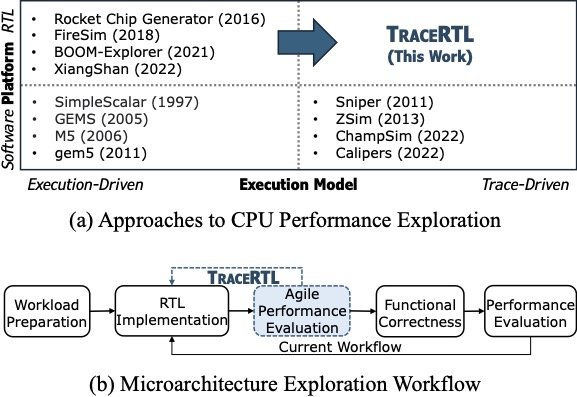

While agile chip development methodologies have accelerated RTL design and simulation, performance evaluation remains constrained by two challenges:

(1) limited benchmark availability, caused by incomplete peripheral/software simulation environments or unavailable source code;

(2) inefficient feature prototyping, caused by the tight coupling between functional correctness and performance evaluation, particularly for large‑scale, error‑prone microarchitectures.

To address these challenges, we propose TraceRTL, an agile, trace-driven performance evaluation methodology that decouples the functional and performance components of CPU RTL designs.

It makes three contributions to the benchmarking community: (1) a trace‑driven exploration framework that bypasses full functional correctness while preserving performance behavior and supports replaying workload traces on RTL designs; (2) a quantitative analysis and mitigation methodology that identifies and reduces trace-driven performance discrepancies; (3) a trace transformation technique, TraceBridge, that replays benchmark traces across different formats and instruction sets.

Using TraceRTL, we develop the first trace-driven RTL CPU derived from XiangShan, a high-performance out-of-order RISC-V processor.

TraceRTL achieves performance accuracies of 99.87% and 99.86% on SPECint2017 and SPECfp2017, respectively.
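The abstract does not state how performance accuracy is computed. One common convention, assumed here purely for illustration, is one minus the relative error between the IPC of the trace-driven run and the IPC of the reference (execution-driven) run. The function name and the IPC values below are hypothetical and are not taken from the paper.

```python
def performance_accuracy(reference_ipc: float, trace_ipc: float) -> float:
    """Return accuracy in percent, assuming accuracy = 100 * (1 - relative IPC error).

    This definition is an assumption for illustration; the source does not
    specify the metric behind its reported accuracy figures.
    """
    if reference_ipc <= 0:
        raise ValueError("reference_ipc must be positive")
    relative_error = abs(trace_ipc - reference_ipc) / reference_ipc
    return 100.0 * (1.0 - relative_error)


# Hypothetical IPC values, not results from the paper.
example = performance_accuracy(reference_ipc=2.000, trace_ipc=1.997)
print(f"{example:.2f}%")
```

Under this assumed definition, a suite-level figure would typically be an average of per-benchmark accuracies, although the averaging method is likewise not given in the abstract.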

With TraceBridge, we evaluate x86-based Google workload traces on a RISC-V RTL CPU and reveal distinct memory-bound behavior.



License

Copyright (c) 2026 The Authors. Published by BenchCouncil Press on Behalf of International Open Benchmark Council

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.